Users’ interests are multi-faceted: recommendation models should be too

A new approach to calibrating recommendations to user interests

Users’ interests are multi-faceted and representing different aspects of users’ interest in their recommendations is an important factor for recommender systems to help users navigate more quickly to items or content they may be interested in. This property is often referred to as the calibration problem and has achieved significant attention recently. Calibration is particularly important given that a sole optimization towards accuracy can often lead to the user’s minority interests being dominated by their main interests, or by a few overall popular items, in the recommendations they receive. In this work, we propose a novel approach based on a minimum-cost flow through a graph for generating accurate and calibrated recommendations.

Calibration in Recommender Systems

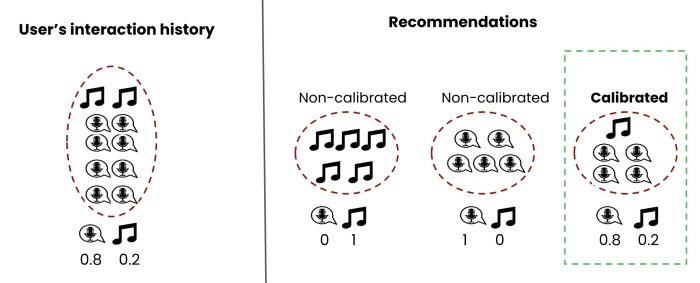

Recommender systems often optimize for the most relevant items to the user. Suppose a user has listened to a lot of pop music, some jazz music and also some podcasts. The recommender algorithm may return a list of recommendations that are all pop music (ignoring other types of music and also ignoring podcasts) or a list containing all music (ignoring podcasts) as those might be the most relevant to the user according to the objective function. Would such a recommendation list be useful to the user? Calibration in recommendation refers to the fact that a good recommendation list should reflect various aspects of the user’s interest ideally in the right proportion.

Below we can see a hypothetical user’s history of streaming on Spotify. We can see that it contains 80% podcast and 20% music. On the right side, we see three different recommendation lists generated for that user. The list shown inside of the green dashed line, represents the most calibrated recommendations as it reflects various interests of the user in the right proportion.

In their paper, Steck proposes a “greedy” (in machine learning, greedy approaches operate iteratively and they pick the best available choice for a given problem at any step to approximate the optimal solution without trying to find the absolute optimal solution) approach [2] that, starting from an empty list, iteratively adds Item i from a pool of candidates to the recommendation list such that it maximizes the following equation:

An item will be added that can make the recommended list most relevant and also least miscalibrated (in other words most calibrated). The algorithm continues until the list reaches its final size. P and Q are the distribution of different content categories (e.g., different movie or music genres, different audio types such as music or podcast.) in the user’s profile and in the recommended list, respectively. λ is a parameter that controls how much importance we give to each of the relevance and miscalibration components.

Calibration as a Minimum-Cost Flow Problem

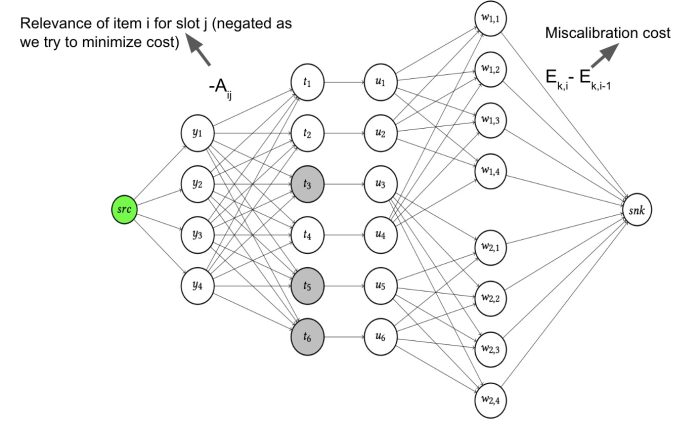

In our work, we propose a new approach to solve the calibration problem based on the concept of minimum-cost flow [1]. We propose a novel approach for calibrated recommendations by modeling this problem via a graph where costs associated with connecting adjacent nodes are derived from the above equation. Our goal is to send n successful flows through the graph with n being the size of the recommendations. Here, we aim to minimize the cost of a flow going through our graph network (as opposed to maximizing a score) and hence we use the negative of each of the components (negative of relevance and miscalibration). The figure below shows an example of a flow graph we construct when we have 6 candidate items (t1…t6) belonging to a certain category (in this case we have two categories denoted by white and gray colors) from which we want to extract a list of recommendations of size 4 (y1…y4).

W nodes represent the categories (we have two categories here W1 and W2) and items belonging to the corresponding category are connected to that node type via an edge.

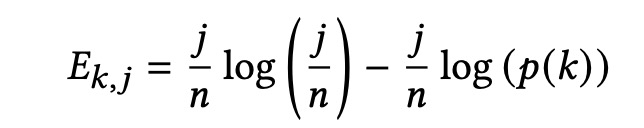

A flow starts from the source node (src) and traverses through the network via the edges and reaches the sink (snk) node. The cost of traversing from ynodes to a particular ti node is the negative of the relevance of candidate item ti , and the cost of traversing from a wk,i node to the sink node is Ek,j -Ek,j-1 where Ek,j is defined as follows:

The cost on other edges are all zero. Here n is the size of the recommended list and j is the number of times category k appears in the list. As an example, the final cost of traversing the graph from the source node all the way to the sink node by visiting nodes y1, t2, u2, w1,3 would be (1-λ) * (-A2,1)+ λ* (E1,3 -E1,2 ) and λ is the weight parameter we control. The algorithm needs n flows (in the example of the graph above n=4) to be sent from the source node to the sink node traversing via different yi nodes as the size of the final recommendation list is n.

Empirical Evaluation

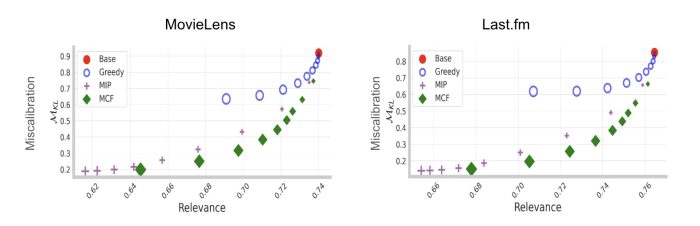

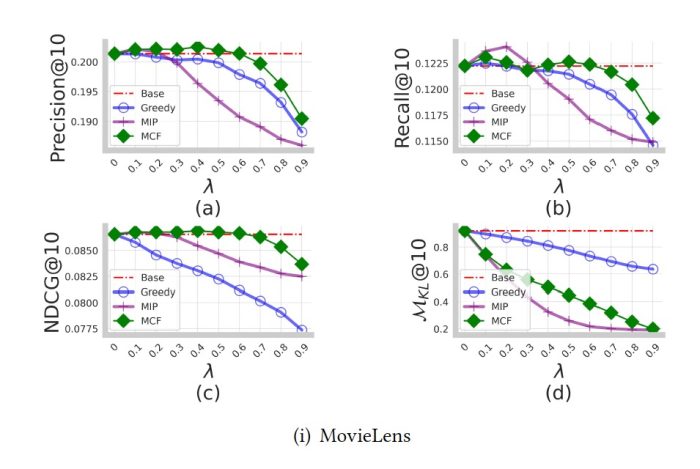

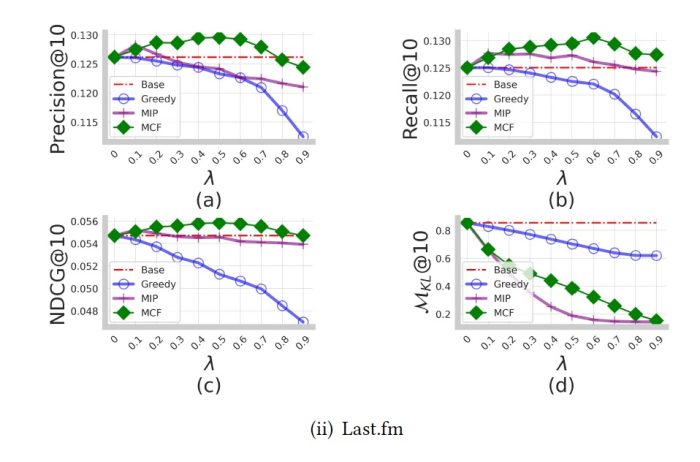

We tested our approach on two different publicly available datasets (MovieLens and Last.fm) and compared its performance to two other previous approaches for generating calibrated recommendations (A greedy approach (Greedy) which is the approach proposed by Steck [2] and a Mixed Integer Programming approach (MIP) proposed by Seyman et. al [3]). The recommendations generated by a base algorithm with no calibration are denoted as Base in the plots below.

These plots show the relevance of the recommendations versus their miscalibration degree for different values of λ. Our proposed approach based on Minimum Cost Flow (MCF) achieves the best trade-off between relevance and miscalibration. This means for every value of λ, none of the existing approaches has both better relevance and lower miscalibration.

We also compared our approach with the existing algorithms in terms of common ranking metrics such as precision, recall, and NDCG. Results can be shown in the plot below, and indicate that our approach consistently achieves better ranking performance for most of the λ values.

Conclusion

Calibration in recommendation—and generally giving a diverse set of choices to the user that best reflects their various aspects of their interest—is an important property of a recommendation algorithm. We’ve shown that a novel approach based on the minimum-cost flow problem can outperform existing techniques in generating relevant and calibrated recommendations.

For more details about the algorithm and the experiments please refer to the following paper:

Calibrated Recommendations as a Minimum-Cost Flow Problem Himan Abdollahpouri, Zahra Nazari, Alex Gain, Clay Gibson, Maria Dimakopoulou, Jesse Anderton, Benjamin Carterette, Mounia Lalmas, Tony Jebara WSDM 2023

References

[1] https://en.wikipedia.org/wiki/Minimum-cost_flow_problem [2] Steck, Harald. "Calibrated recommendations." RecSys 2018. [3] Seymen, Sinan, Himan Abdollahpouri, and Edward C. Malthouse. "A constrained optimization approach for calibrated recommendations." RecSys 2021